Okay: for a long time I was too scared to address this issue again. But part of the reason for my ongoing work with eFIP was to arm myself for another encounter with this demon. . . .

I’m talking about the Gould conjecture.

This is the famous sports-analytics theory, propounded by evolutionary biologist Stephen Jay Gould, that differences in the quality or skill of athletes at the elite level tend to diminish over time. He tossed out a couple of mechanisms. One is the deepening talent pool, which one would expect to generate a higher density of top-flight performers, who will start to bunch up around the upper limit of human sports capability.

But another, even more interesting, idea is that selection effects are at work. Once it becomes clear that a particular kind of skill (or maybe a type of technique or training) is creating a competitive advantage, players devote themselves to developing it. Likewise, teams devote themselves to acquiring players who have it. Finally, competitors become motivated to devise neutralizing strategies or techniques, and teams to stock their rosters with those types too. So differences in outcomes attributable to the skill, technique, or what-have-you diminish, and variance among competitors shrinks.

Gould presented this argument to explain the extinction of the .400 hitter. But it’s actually kind of hard to get how his position works here. Competition among hitters isn’t zero sum. If they all become increasingly better, variance will decline as more and more of them bat .400. Only if pitchers and fielders are becoming uniformly better would we expect .400 hitting to decline—and that’s assuming nothing external to player quality (including emphasis on other, more productive skills than hitting consistency) is driving averages down. Gould’s exposition didn’t really work all this out clearly, in my view.

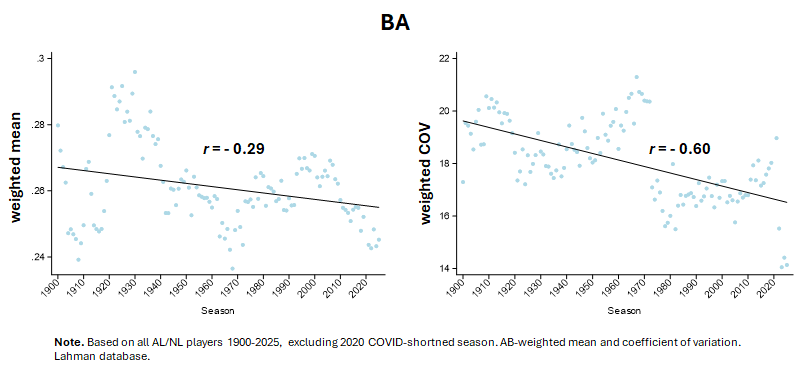

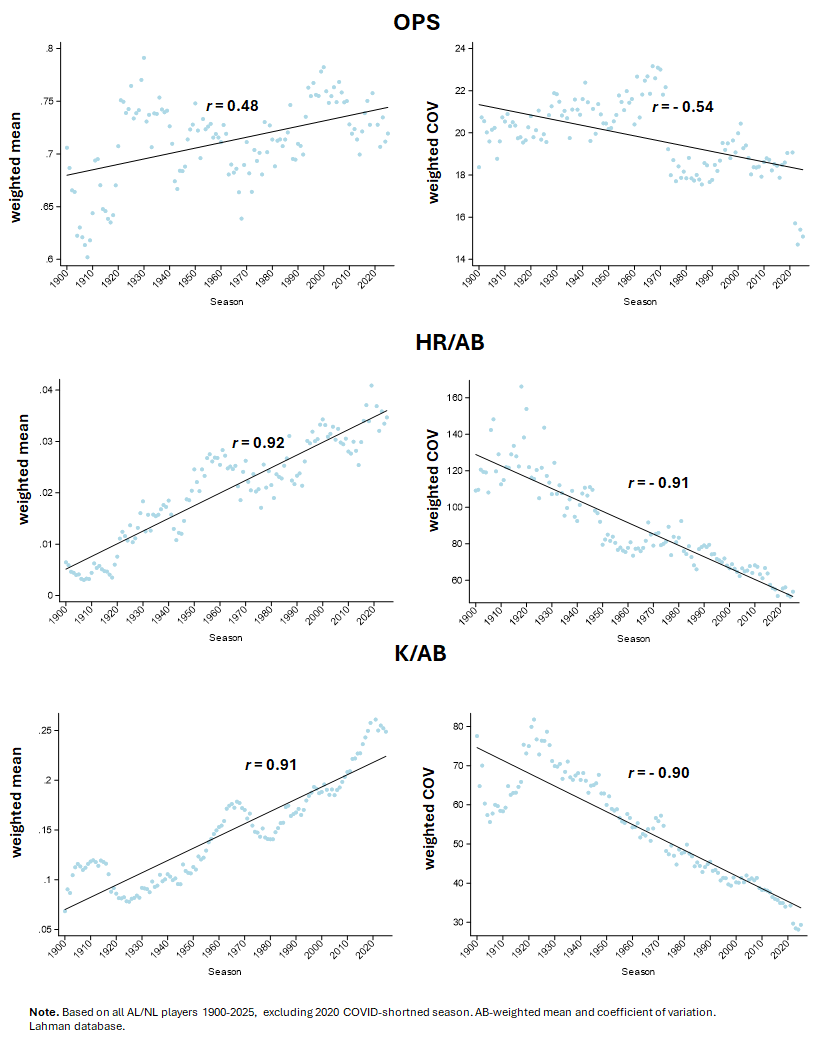

But anyway, if you examine these figures, which juxtapose changes in AL/NL mean batting averages with changes in the variability of individual players' averages, you'll see what he was talking about.

(In case you are wondering, the coefficient of variation, or COV, is a standardized version of the standard deviation, formed by dividing the standard deviation by the mean and multiplying by 100. This rescaling is useful when the mean changes, because then a change in the standard deviation is not by itself a straightforward indicator of changing dispersion. COV can't be used when the mean is zero, but when the mean is fixed at zero, its stability obviates the need for standardizing the standard deviation anyway.)
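For anyone who wants to compute this at home, here's a minimal Python sketch of the COV as defined above. It uses the sample standard deviation (the population version would make the same point), and the two "seasons" of batting averages are made up purely for illustration: they have the same standard deviation but different means, so their COVs differ.

```python
import statistics

def coefficient_of_variation(values):
    """Sample standard deviation divided by the mean, times 100."""
    mean = statistics.mean(values)
    if mean == 0:
        # Dividing by a zero mean is undefined, so refuse rather than blow up
        raise ValueError("COV is undefined when the mean is zero")
    return statistics.stdev(values) / mean * 100

# Hypothetical batting averages: identical spread, but season B has a higher mean
season_a = [0.250, 0.270, 0.290]
season_b = [0.280, 0.300, 0.320]

for label, avgs in (("A", season_a), ("B", season_b)):
    sd = statistics.stdev(avgs)
    print(label, "SD:", round(sd, 4), "COV:", round(coefficient_of_variation(avgs), 2))
```

Same SD, different COV: relative to its mean, season B is the more tightly bunched one.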

The phenomenon, moreover, is ubiquitous. Here’s some more evidence of it in hitting performance:

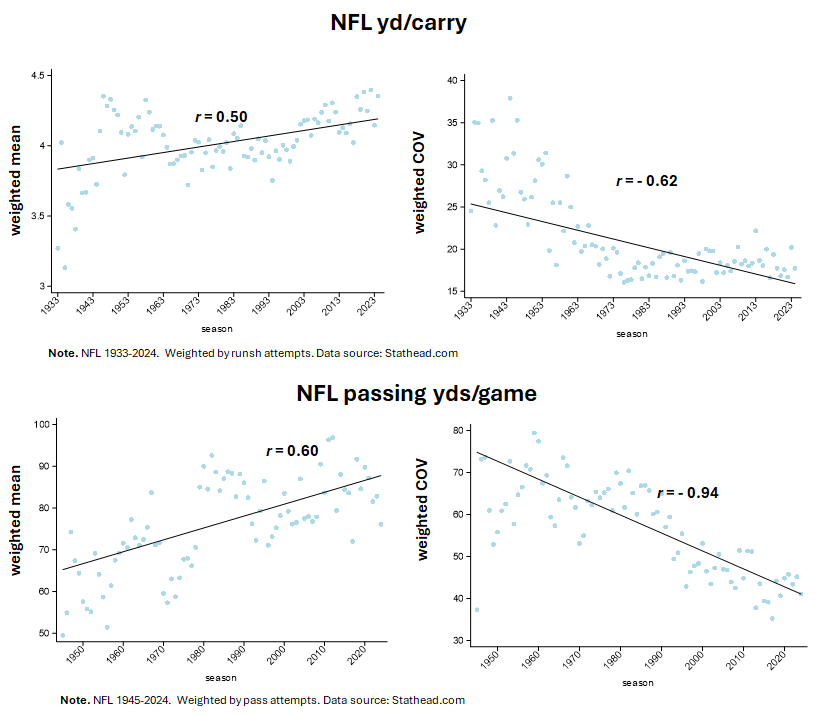

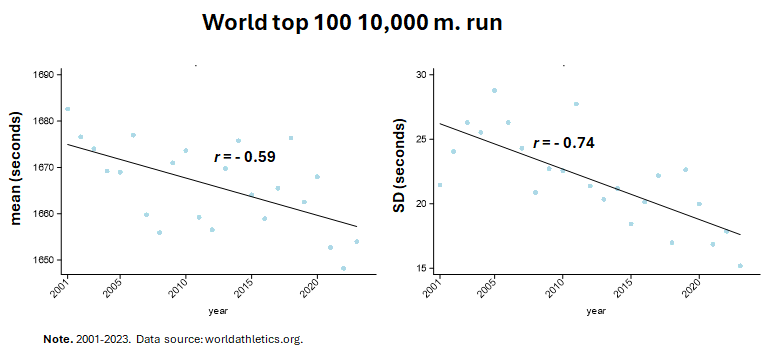

Moreover, because the mechanisms involved aren’t distinctive of baseball, we should expect to see Gould’s conjecture—persistently declining variance as a sport matures—occurring in other athletic realms. And we definitely do!

Okay, but get ready to get freaked out.

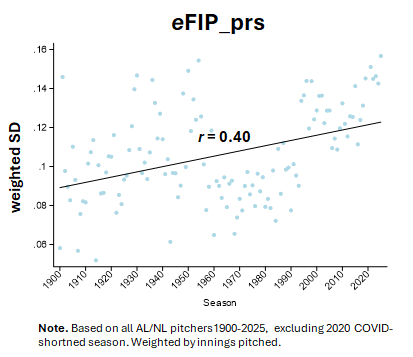

There’s an exception: baseball pitching!

The other day, I introduced eFIP, a pitcher-skill estimator that uses empirical weights to determine the run-avoiding consequence of fielding-independent outcomes (viz., strikeouts, walks, home runs allowed, and hit batters). There's no real value in looking at the mean, because the estimator measures runs saved above average, which has a league mean of 0 by design. But look at its SD!
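To make the "mean of 0 by design" point concrete, here's a minimal sketch of a runs-saved-above-average calculation over fielding-independent outcomes. This is not eFIP itself: the run values in `RUN_VALUES` are hypothetical placeholders (eFIP estimates its weights empirically), and the toy season is invented. Because each pitcher is compared with league-average rates computed from the same pool of pitchers, the values sum to zero across the league, so the spread is the only thing left to look at.

```python
import statistics

# HYPOTHETICAL run values per event (not eFIP's empirically estimated weights)
RUN_VALUES = {"K": -0.27, "BB": 0.31, "HBP": 0.33, "HR": 1.40}

def runs_saved_above_average(pitchers):
    """pitchers: name -> {'PA': ..., plus a count for each event in RUN_VALUES}.

    Runs saved = run value of the events a league-average pitcher would have
    allowed in the same number of PA, minus the run value of what he allowed.
    """
    total_pa = sum(p["PA"] for p in pitchers.values())
    # League rates are pooled from the same pitchers being evaluated
    league_rate = {
        e: sum(p[e] for p in pitchers.values()) / total_pa for e in RUN_VALUES
    }
    return {
        name: sum(
            RUN_VALUES[e] * (league_rate[e] * p["PA"] - p[e]) for e in RUN_VALUES
        )
        for name, p in pitchers.items()
    }

# Invented counts for a three-pitcher "league"
season = {
    "A": {"PA": 700, "K": 190, "BB": 50, "HBP": 6, "HR": 18},
    "B": {"PA": 650, "K": 120, "BB": 60, "HBP": 8, "HR": 25},
    "C": {"PA": 300, "K": 80, "BB": 25, "HBP": 2, "HR": 9},
}
rs = runs_saved_above_average(season)
values = list(rs.values())
print({name: round(v, 1) for name, v in rs.items()})
print("mean:", round(statistics.mean(values), 9))  # zero up to float rounding
print("SD:", round(statistics.stdev(values), 2))
```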

Maybe you think this is something weird about eFIP, perhaps even evidence that it is defective in some way. But it turns out that other measures of pitching proficiency show the same thing.

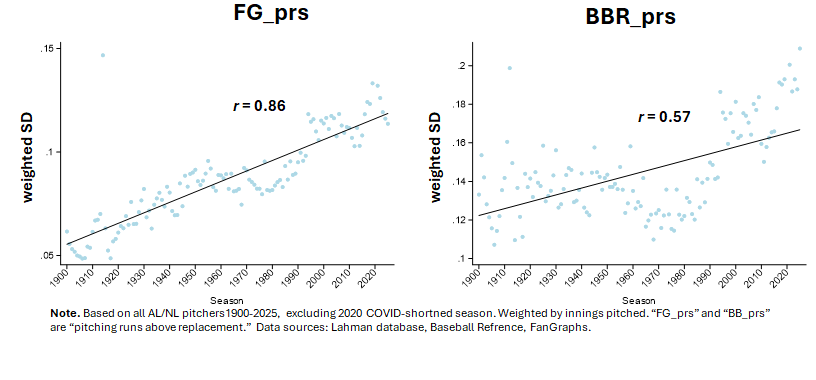

Consider Baseball Reference’s and FanGraphs’s runs-saved measures:

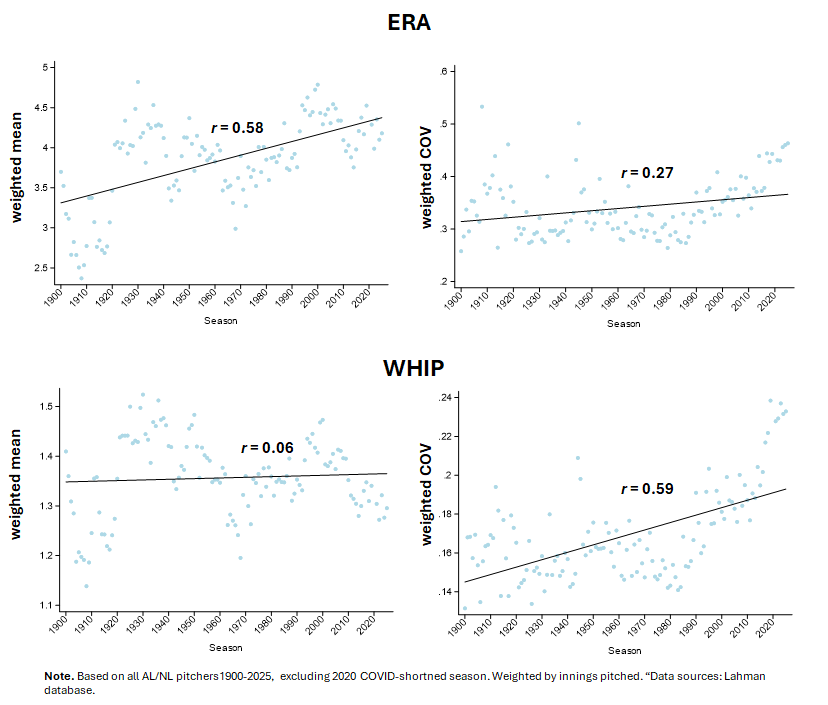

Although they are much weaker measures of pitching skill than either eFIP or the BBR and FG runs-saved measures, you can see the same counter-Gouldian trend even in ERA and WHIP!

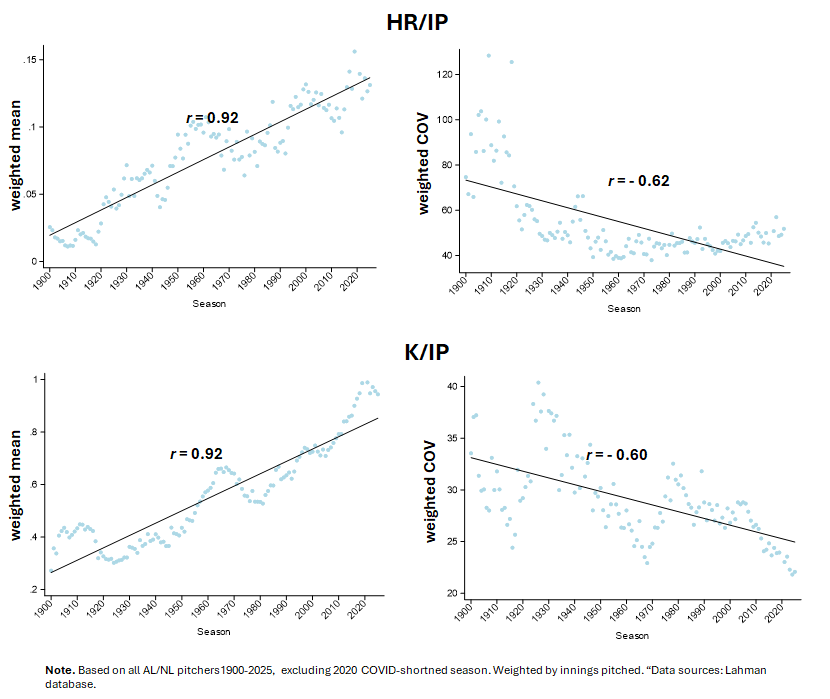

Perhaps you think this is a result of MLB's changing usage patterns, which have dramatically reduced the number of innings that starters pitch. More pitchers throwing fewer innings could increase variance, sure, since smaller samples yield noisier estimates. But if that were so, one would expect the variance of the component indicators to be rising too, wouldn't one?

Pitchers are becoming more uniform in their tendencies to strike out hitters and avoid home runs—so the increasing variance in runs-saved estimators can’t be blamed on measurement imprecision associated with shorter mound stints.
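Here's a quick simulation of the small-sample worry, under assumptions made up for illustration: every pitcher has the identical true strikeout rate (0.22), and the only thing that varies is how many batters he faces. Any spread in observed K rates is then pure sampling noise, and it grows as stints shrink. If shorter stints were driving things, the indicators themselves should be getting more variable, which is the opposite of what the data show.

```python
import random
import statistics

def observed_k_rate_sd(true_rate, batters_faced, n_pitchers, rng):
    """SD of observed K rates when every pitcher has the SAME true K rate."""
    rates = []
    for _ in range(n_pitchers):
        # Each plate appearance is a strikeout with probability true_rate
        ks = sum(rng.random() < true_rate for _ in range(batters_faced))
        rates.append(ks / batters_faced)
    return statistics.stdev(rates)

rng = random.Random(2024)  # fixed seed so the run is reproducible
long_stints = observed_k_rate_sd(0.22, 800, 1000, rng)   # hypothetical workhorse load
short_stints = observed_k_rate_sd(0.22, 150, 1000, rng)  # hypothetical short-stint load

print(f"SD of observed K rate, 800 BF: {long_stints:.4f}")
print(f"SD of observed K rate, 150 BF: {short_stints:.4f}")
```

The binomial formula `sqrt(p * (1 - p) / n)` predicts the same pattern: noise scales with one over the square root of batters faced.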

But ruling out this explanation only compounds the mystery: if we are seeing declining variance in the indicators of pitching proficiency, why aren't we seeing it in the indicator-derived estimators of pitcher skill in run prevention? Mathematically, that can happen easily enough, since the indicators can covary more strongly even as each of them becomes less variable, and the variance of a weighted combination includes those covariance terms. But it’s still just weird to think that pitchers are becoming more uniform in competencies like punching hitters out and avoiding dingers yet more disparate in what ultimately matters: keeping runs from crossing the plate!
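Here's a minimal sketch of how that can happen, using the identity `Var(Σ w_i X_i) = Σ_i Σ_j w_i w_j Cov(X_i, X_j)`. It takes a two-indicator composite (strikeout rate and home-run rate), and the weights, standard deviations, and correlations are all hypothetical. In the "later" era each indicator is less variable, but high-strikeout pitchers also allow fewer homers; because the two weights have opposite signs, that negative correlation adds to the composite's variance rather than subtracting from it.

```python
def composite_variance(weights, sds, corr):
    """Variance of sum_i w_i * X_i, given each X_i's SD and the correlation matrix."""
    n = len(weights)
    return sum(
        weights[i] * weights[j] * corr[i][j] * sds[i] * sds[j]
        for i in range(n)
        for j in range(n)
    )

# HYPOTHETICAL composite: 0.3 * K_rate - 1.4 * HR_rate
# (strikeouts save runs, homers cost them)
weights = [0.3, -1.4]

# "Earlier era": more spread in each indicator, K and HR rates uncorrelated
early_sds = [0.05, 0.010]
early_corr = [[1.0, 0.0], [0.0, 1.0]]

# "Later era": less spread in each indicator, strongly negative K-HR correlation
late_sds = [0.04, 0.008]
late_corr = [[1.0, -0.8], [-0.8, 1.0]]

early_var = composite_variance(weights, early_sds, early_corr)
late_var = composite_variance(weights, late_sds, late_corr)
print(f"earlier-era SD of composite: {early_var ** 0.5:.4f}")
print(f"later-era SD of composite:   {late_var ** 0.5:.4f}")
```

Both indicators shrink in spread, yet the composite gets more spread out.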

This exception, moreover, is definitely hard to reconcile with Gould’s motivation for forming his conjecture: explaining the extinction of .400 hitting. If pitchers aren’t becoming uniformly more skillful, what is holding batting averages down as improvement in hitting ability becomes more uniform across players? True, high averages aren’t valued very much anymore; but then why is variance in hitters’ averages still declining? If the trait is no longer being selected on, why isn’t variance rising for this metric?!

Actually, my resolve to get to the bottom of this is part of the reason I returned to the pitcher-estimator problem. I feel like work on other projects has given me new proficiencies and insights that might help me crack this anomaly, which I was helpless to explain before. It might even lead to more general ideas about how athletic performance evolves. . . .

Ready to tackle this one with me?

Ready … set … go!